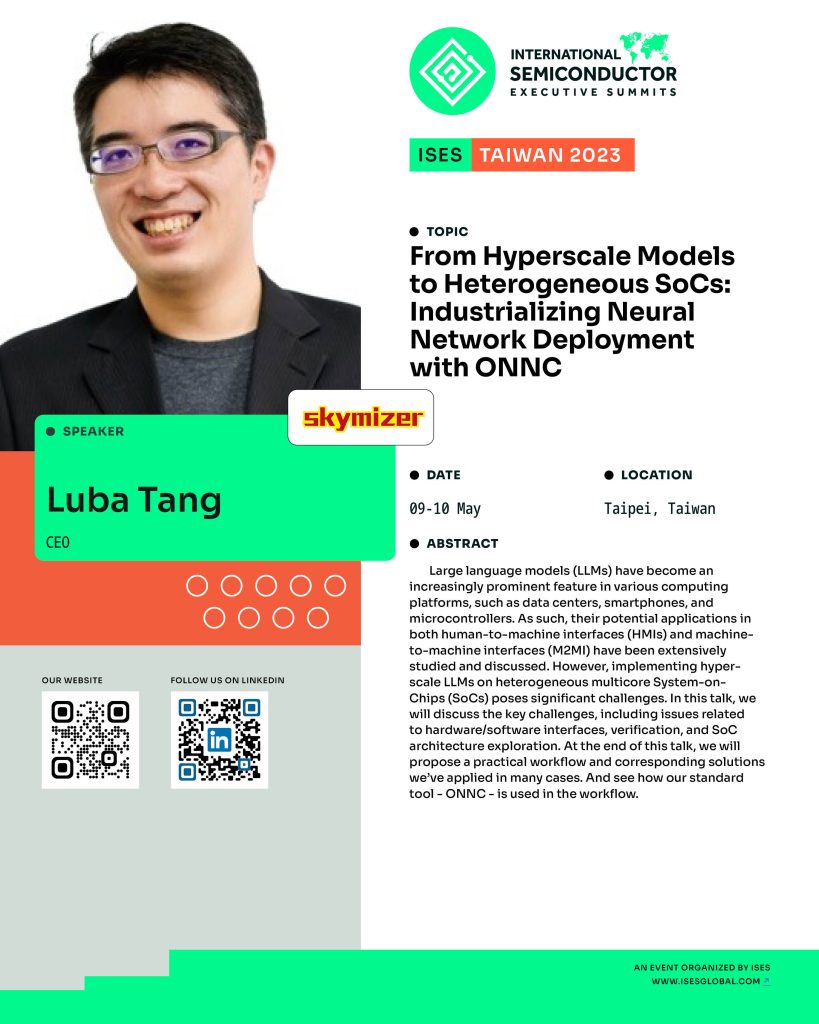

From Hyperscale Models to Heterogeneous SoCs: Industrializing Neural Network Deployment with ONNC

Large language models (LLMs) have become increasingly prominent across computing platforms ranging from data centers to smartphones and microcontrollers. Consequently, their potential applications in both human-to-machine interfaces (HMIs) and machine-to-machine interfaces (M2MIs) have been extensively studied and discussed. However, deploying hyperscale LLMs on heterogeneous multicore Systems-on-Chip (SoCs) poses significant challenges.

In this talk, we will discuss the key challenges, including hardware/software interfaces, verification, and SoC architecture exploration. We will then propose a practical workflow, along with the corresponding solutions we have applied in many cases, and show how our standard tool, ONNC, is used within that workflow.