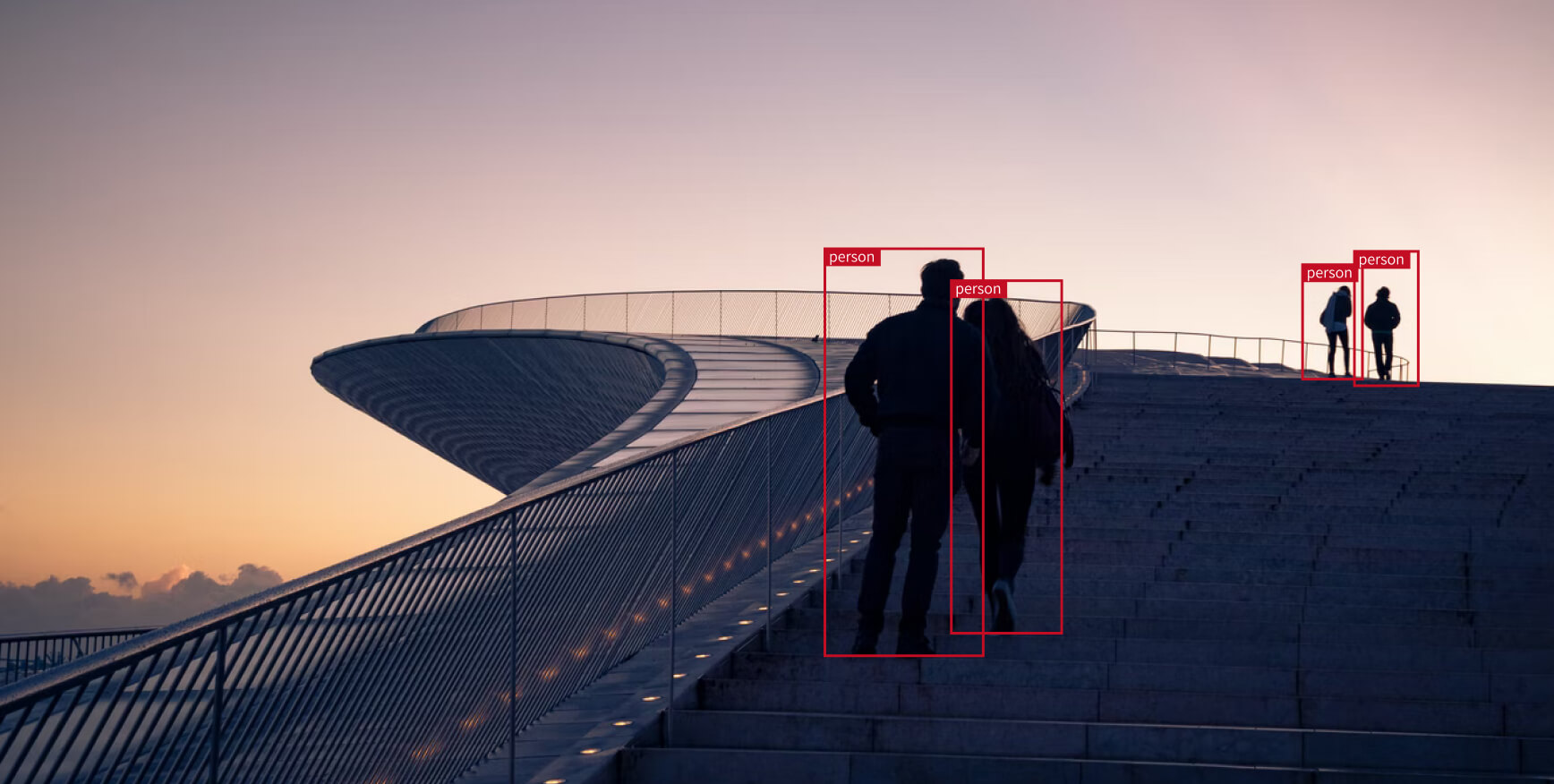

Surveillance

About_

Heterogeneous Computing in Intelligent Surveillance

One of the most important benefits of AI-equipped surveillance cameras is the ability to detect people or faces, which matters in high-security areas where a human presence must be identified quickly. The core chips in such AI cameras benefit from heterogeneous computing, which balances power consumption against performance. However, heterogeneous computing leads to software fragmentation, and many companies have spent enormous R&D resources yet still cannot deliver products on time. ONNC provides a full-fledged software toolchain, including a Compiler, Calibrator, Runtime and Virtual Platform, for chip providers in the intelligent surveillance industry, and helps them shorten time-to-market.

Challenges of Heterogeneous Computing for AI SoCs

Smart Cameras usually adopt a heterogeneous computing architecture. Heterogeneous computing is the use of more than one type of processing element (PE) in a single System on Chip (SoC). The most common combination in Smart Cameras is central processing units (CPUs), graphics processing units (GPUs) and Deep Learning Accelerators (DLAs). A DLA is an AI accelerator designed for highly efficient, low-power deep learning inference. Such a heterogeneous architecture can provide high computing power while minimizing power consumption, because each type of computation is delegated to the PE best suited to it.
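The idea of delegating each computation to the best-suited PE can be illustrated with a minimal sketch. Everything here is hypothetical: the `PE_COST` table, its relative cost numbers, and the `assign_operators` helper are made up for illustration and do not describe ONNC's actual scheduling.

```python
# Hypothetical capability table: the operator types each PE can run, with an
# illustrative relative energy cost per operator (made-up numbers).
PE_COST = {
    "DLA": {"Conv": 1, "MatMul": 1, "Relu": 1},
    "GPU": {"Conv": 4, "MatMul": 3, "Relu": 2, "Resize": 2},
    "CPU": {"Conv": 20, "MatMul": 15, "Relu": 5, "Resize": 6,
            "NonMaxSuppression": 8},
}


def assign_operators(ops):
    """Map each operator to the cheapest PE that supports it."""
    plan = []
    for op in ops:
        # Collect (cost, pe) pairs for every PE able to run this operator.
        candidates = [(costs[op], pe) for pe, costs in PE_COST.items()
                      if op in costs]
        if not candidates:
            raise ValueError(f"no PE supports operator {op!r}")
        _, pe = min(candidates)
        plan.append((op, pe))
    return plan


# A toy detection pipeline expressed as a flat list of operator types.
model = ["Resize", "Conv", "Relu", "Conv", "Relu", "NonMaxSuppression"]
plan = assign_operators(model)
for op, pe in plan:
    print(f"{op:>18} -> {pe}")
```

In this toy table the convolution layers land on the DLA, the resize step on the GPU, and the post-processing step on the CPU. A real toolchain would also have to account for data movement between PEs, which this sketch ignores.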

However, the hundreds of different DLAs lead to software fragmentation, and software teams suffer from it. Every DLA has its own unique hardware/software interfaces, so developing a software development kit for it, including a Compiler and Runtime, is tough. Many companies have spent thousands of man-years and still cannot get a product onto the shelf for this reason.

ONNC Provides a Full-fledged Software Toolchain for AI Chips

ONNC is a portable turnkey software development kit targeting such heterogeneous multicore systems. It includes a Compiler, Calibrator, Runtime and Virtual Platform.

ONNC Compiler

ONNC Compiler is designed to transform neural networks into the diverse machine instructions of the different PEs, DMA variants, hierarchical bus systems, high-speed I/O and fragmented memory subsystems found in such heterogeneous multicore systems. It can coordinate multiple diverse PEs in AI chips for Smart Cameras.

ONNC Calibrator

ONNC Calibrator is a Post-Training Quantization (PTQ) technique that helps AI chip design teams keep high precision during quantization. It provides architecture-aware quantization that helps AI chips maintain 99.99% precision even in INT8 mode. In addition, ONNC Calibrator is designed for modern architectures and heterogeneous multicore devices.
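For readers unfamiliar with post-training quantization, the following is a minimal sketch of the generic idea: choose a scale from calibration data, then map floating-point values to INT8 and back. It uses plain symmetric per-tensor max calibration, which is a textbook baseline and not ONNC Calibrator's architecture-aware method; the function names and sample data are assumptions made for illustration.

```python
def calibrate_scale(samples, num_bits=8):
    """Pick a symmetric per-tensor scale from the largest magnitude seen."""
    qmax = 2 ** (num_bits - 1) - 1  # 127 for INT8
    max_abs = max(abs(x) for x in samples)
    return max_abs / qmax if max_abs > 0 else 1.0


def quantize(values, scale, num_bits=8):
    """Round each value to the nearest integer step and clamp to the range."""
    qmax = 2 ** (num_bits - 1) - 1
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]


def dequantize(qvalues, scale):
    """Map integer codes back to approximate floating-point values."""
    return [q * scale for q in qvalues]


# Made-up calibration samples standing in for observed activation values.
calibration_data = [-1.27, -0.5, 0.0, 0.31, 0.9, 1.27]
scale = calibrate_scale(calibration_data)
q = quantize(calibration_data, scale)
restored = dequantize(q, scale)

# For in-range values the round-trip error is bounded by half a step.
max_error = max(abs(a - b) for a, b in zip(calibration_data, restored))
print(q, max_error)
```

Max calibration is sensitive to outliers, which is one reason more careful calibration methods exist: a single large activation stretches the scale and wastes resolution on the rest of the tensor.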

ONNC Forest Runtime

ONNC Forest Runtime executes compiled neural network models. It is retargetable and has a modular architecture that supports diverse hardware platforms, including TinyML, mobile and datacenter. ONNC Forest Runtime supports dynamic shapes with hot batching technology. Its unique bridging technology allows multiple applications to share a common accelerator and coordinates tasks among multiple accelerators.
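As a rough illustration of why batching matters when several applications share one accelerator, the sketch below groups pending inference requests by input shape so that same-shaped requests can be submitted together. This is a generic batching sketch under stated assumptions, not ONNC's hot batching or bridging technology; `form_batches`, the request format, and the camera names are all hypothetical.

```python
from collections import defaultdict


def form_batches(requests, max_batch=4):
    """Group (app, shape) requests by shape into batches of at most max_batch."""
    by_shape = defaultdict(list)
    for app, shape in requests:
        by_shape[shape].append(app)

    batches = []
    for shape, apps in by_shape.items():
        # Split each same-shape group into chunks the accelerator can take.
        for i in range(0, len(apps), max_batch):
            batches.append((shape, apps[i:i + max_batch]))
    return batches


# Hypothetical pending requests from three camera applications.
pending = [
    ("camera_a", (3, 224, 224)),
    ("camera_b", (3, 224, 224)),
    ("camera_c", (3, 320, 320)),
    ("camera_a", (3, 224, 224)),
]
batches = form_batches(pending, max_batch=2)
for shape, apps in batches:
    print(shape, apps)
```

Grouping by shape keeps each batch uniform, at the cost of reordering requests across applications; a production runtime would also need to bound how long a request waits for its batch to fill.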

ONNC Virtual Platform

ONNC Virtual Platform is a hardware/software co-design tool that reduces time and complexity in early-stage development. It is composed of the basic components of an SoC, such as CPU, bus, memory and peripherals. ONNC Virtual Platform adopts popular simulation tools such as QEMU, along with TLMv2 models for bus, memory and so on. It provides generic templates for architectural models so that customers can easily build their own behavioral or transactional models of hardware components.

Implementing system software from scratch is not easy, because many components must be taken into account. With the help of ONNC, you can focus on the core algorithms that truly distinguish your AI chip, because ONNC contains all the components you need to develop system software for AI chips.

Achievement_

Benefits of Adopting ONNC with your AI Chips

To sum up, ONNC provides a full-fledged software toolchain for AI chip providers. With ONNC, AI chip providers benefit in the following ways:

Shorten Time-to-Market

Enable any AI SoC to be easily adopted in rapidly evolving end applications by automatically compiling AI models into machine code that the AI SoC can run efficiently.

Enhance Performance

Improve speed, reduce memory usage and increase inference precision by leveraging all the computing and memory resources in a complex heterogeneous multicore system.

Achieve Hardware/Software Co-optimization

Align software development with the hardware at a much earlier stage, during SoC architecture exploration, and reserve flexibility for possible changes in future AI applications.

Reach out today to see how ONNC can meet your AI system software needs.